A 3D Body Scan for Nine Cents — Without SMPL

Everyone in computer vision uses SMPL for human body reconstruction. There is one problem: SMPL’s license is non-commercial, which blocks production use unless you negotiate a paid commercial license. For us, a tiny two-person startup, it was out of reach.

Instead we built a fully commercial pipeline using Naver’s Anny and Meta’s MHR — both Apache 2.0. Both appeared in November 2025. The whole pipeline is cheap enough to become consumer-grade — and by consumer-grade I mean not just cost, but also time and UX. A normal person shouldn’t need instructions, a special photo, or more than a minute. Did we solve the holy grail of fashion? Here is how it works, what it costs, and where it breaks down.

The SMPL licensing trap

SMPL became the standard for body models. Almost all the research about Human Mesh Recovery (HMR) you read uses it under the hood. However, the license is very clear — while its source is available, it cannot be used for commercial purposes unless you get a commercial sub-license from Meshcapade — the exclusive sub-licensor appointed by Max Planck. Pricing has never been public; you email sales and negotiate. Recently Epic Games acquired Meshcapade (February 2026, closing April), so the future of SMPL commercial licensing is unclear. Either way — not an option for a two-person startup.

The SMPL license also prohibits training neural networks for commercial use. Given how many commercial products rely on HMR models trained with SMPL supervision, the licensing gap in this space is wider than most people realize.

The license applies at the point where you turn params into a 3D mesh. And here is the biggest trap: almost every body-model pipeline holds SMPL params at some stage and converts them into a mesh, a step that is covered by the non-commercial license. HMR is a good example: most networks predict SMPL params rather than a mesh, and only then build the mesh from them.

What’s more, it is often not obvious that SMPL is involved, and even models that avoid it can be off-limits. Naver’s MultiHMR is the case you would never suspect: it does have Anny-based checkpoints that skip SMPL entirely, but the model itself is non-commercial. So close, yet so far. The list goes on: HMR 2.0, TokenHMR, SMPLer-X, ROMP, HybrIK, WHAM. Their code may be MIT or Apache 2.0, but they all output SMPL params. To get a mesh from those params you need the SMPL model files, and those are non-commercial.

The landscape (March 2026)

Two body models changed everything in late 2025. Both Apache 2.0.

Anny from Naver Labs Europe. The big deal: its 11 shape params actually mean something — gender, age, weight, height, muscle, cup size. When a customer asks “will this fit my hips?” you need params like these, not abstract PCA coefficients. On top of that it has 256 local change blendshapes for things like waist circumference or breast volume. 14K vertices, 127 joints, fully differentiable. Great work from the HUMANS team.

MHR from Meta. Different philosophy: 45 abstract shape coefficients. Shape param #37 means nothing to a human. But the mesh quality is excellent — 18K vertices, 127 joints, 7 LOD levels. And critically: Meta’s SAM 3D Body outputs MHR params directly. Right now it’s the best single-image HMR you can use commercially.

We use both. MHR because it’s the only body model with a commercial HMR path (SAM 3D Body). Anny for semantic understanding and fit guidelines. The bridge between them is our own MHR→Anny conversion — more on that below.

| | SMPL/SMPL-X | MHR | Anny |
|---|---|---|---|
| License | Non-commercial (Meshcapade for commercial) | Apache 2.0 | Apache 2.0 |
| Vertices | 6,890 / 10,475 | 18,439 | 13,718 |
| Shape params | 10 / 10 (abstract PCA) | 45 (abstract) | 11 semantic + 256 local |
| Param meaning | None | None | Human-readable |
| HMR support | Almost everything | SAM 3D Body | MultiHMR (non-commercial) |
| Production-ready | Behind paywall | Yes | Yes |

How it works

We decided to build the whole pipeline around Anny as the target body model. The semantic params are the reason — they don’t just describe the body, they let you manipulate it meaningfully. Want to tune a specific region like waist or bust? Change one param. Want to predict how a body looks two kilos lighter, or two months into pregnancy? You can do that too. No other body model gives you this.

The pipeline has two input paths that converge into the same downstream flow: body mesh → measurements → measurement tuning → physics draping.

graph TD

P[Photo] --> SAM[SAM 3D Body]

SAM --> MHR[MHR]

MHR --> CONV[MHR → Anny]

CONV --> M[Measurements]

Q[Questionnaire] --> A[Anny]

A --> M

M --> T[Measurement tuning]

T --> D[Physics draping]

The photo path starts with SAM 3D Body. Given the SMPL trap, we had a hard time finding a commercially usable HMR model. We’re optimistic about Naver’s MultiHMR on Anny-only params, but it’s also non-commercial. Fortunately, in late 2025, Meta published SAM 3D Body — single photo in, MHR body params out, 12GB VRAM. A sharp eye can spot one thing though: SAM 3D outputs MHR params while Anny is a different model entirely. So the photo path needs a bridge — we built our own MHR→Anny converter since Anny’s built-in regressor gave quite poor results. Recovering from a photo also has one fundamental flaw — it’s impossible to determine absolute measurements of the person. Even with one known measurement, pose, lighting, or camera lens can throw off the rest.

The questionnaire path is different. We trained a model that predicts Anny body params directly from 8 inputs — height, weight, gender, body shape, and a few more. No photo, no GPU, <1s inference. More on the questionnaire in the next post.

Both paths converge at measurement tuning. This is the key insight of the whole pipeline. No single method — photo or questionnaire — nails body measurements on the first attempt. The goal isn’t to get 1 cm MAE from reconstruction alone. It’s to get a close enough body model that you can then tune to less than 1 cm. Given a reconstructed body and a few known measurements, tuning optimizes the body params to close the gap.
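The tuning step can be sketched as a small least-squares problem. This is a minimal illustration, not our production code: `measure` is a hypothetical toy linear model standing in for "Anny params → mesh → circumferences", and the two shape params, the sensitivities, and the target values are all made up. The solver is a plain Gauss-Newton loop with a finite-difference Jacobian, chosen here only because it works with any black-box measurement function.

```python
import numpy as np

# Toy stand-in for "body params -> measurements" (hypothetical, NOT Anny).
# Rows: bust, waist, hips in cm; columns: two made-up shape params.
BASE = np.array([90.0, 75.0, 95.0])
SENS = np.array([[8.0, 2.0],
                 [6.0, 5.0],
                 [7.0, 1.0]])

def measure(params):
    """Return [bust, waist, hips] in cm for the toy model."""
    return BASE + SENS @ params

def tune(params, known, steps=20, eps=1e-4, damping=1e-6):
    """Adjust params so the measurements in `known` ({index: cm}) match.

    Gauss-Newton with a finite-difference Jacobian, so it only needs a
    black-box `measure` function.
    """
    params = np.asarray(params, dtype=float).copy()
    idx = np.array(sorted(known))
    target = np.array([known[i] for i in idx])
    for _ in range(steps):
        current = measure(params)[idx]
        residual = current - target
        jac = np.empty((len(idx), len(params)))
        for j in range(len(params)):
            bumped = params.copy()
            bumped[j] += eps
            jac[:, j] = (measure(bumped)[idx] - current) / eps
        # Damped normal equations keep the step finite if underdetermined.
        lhs = jac.T @ jac + damping * np.eye(len(params))
        params += np.linalg.solve(lhs, -jac.T @ residual)
    return params

initial = np.array([0.0, 0.0])   # pretend output of reconstruction
known = {1: 82.0, 2: 101.0}      # user-supplied waist and hips, in cm
tuned = tune(initial, known)
print(measure(initial), "->", measure(tuned))
```

Note that bust is not constrained here, so it simply moves along with the params that fix waist and hips. That is the point of starting from a reasonable reconstruction: the unconstrained measurements stay plausible.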

Once you have a tuned body mesh, the garment gets draped on it with physics. But that’s a different post.

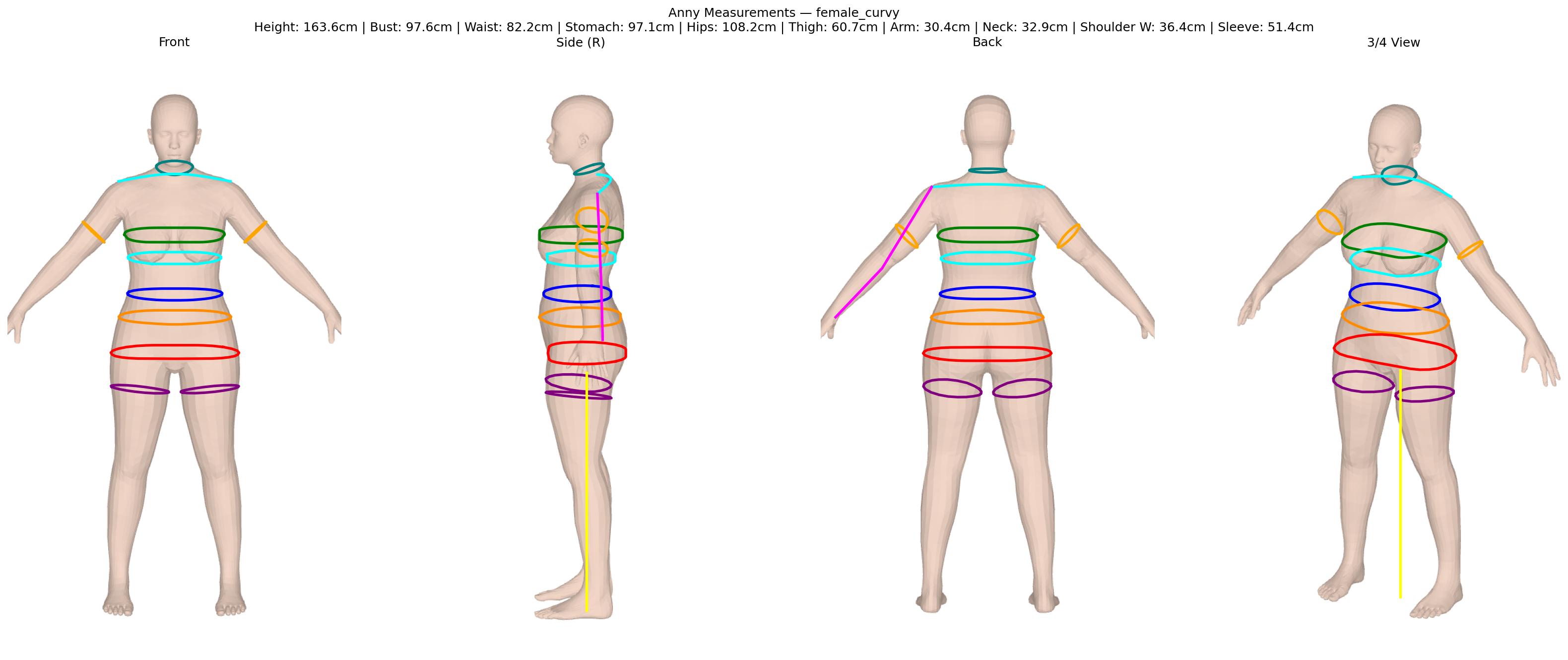

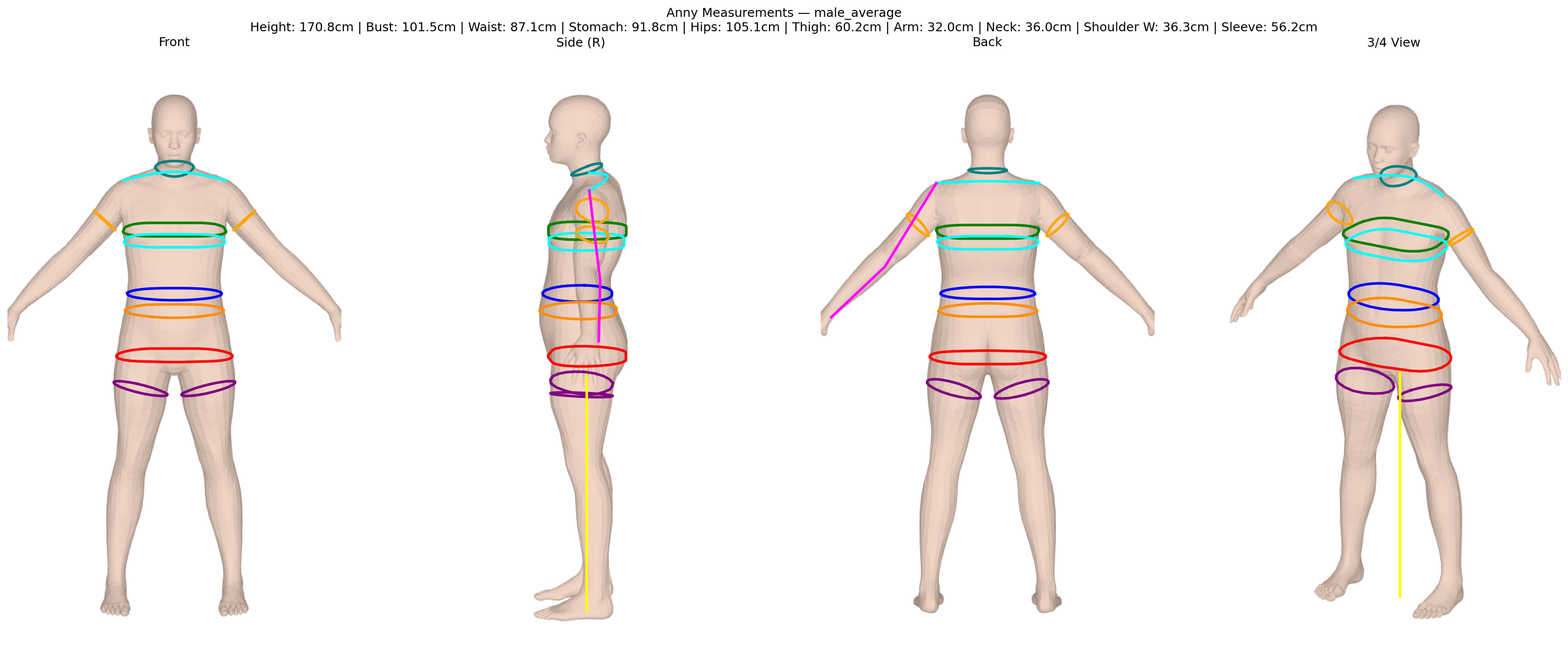

Here’s what the output looks like — an Anny body with circumference measurements:

What it costs to run

Our goal was simple: keep the cost close to zero, because we don’t have much funding. The most expensive thing is obviously the GPU. We tried to avoid it as much as possible, but in some cases we had to use it. Here are real numbers from our GCP billing, not estimates or projections.

The photo body reconstruction step (SAM 3D Body + MHR→Anny conversion) runs as a Cloud Run job with an L4 GPU at ~$0.67/hour. We ran it 30 times during development: about 61 minutes of total GPU time and ~$2.60 in total (GPU + CPU + memory). That’s about $0.09 per run. And honestly, it’s not optimized. Each successful job takes about 5 minutes, but the actual compute (SAM 3D inference + fitting) is around 80 seconds. The rest is loading models: SAM 3D weights (~66s) and Anny blendshapes (~2 min) get re-downloaded on every cold start. Proper caching should bring the total under 2 minutes and the cost to ~$0.03-0.04 per run.
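The arithmetic behind those figures, as a quick sketch. The inputs are the numbers quoted above; the one assumption added here is that cost scales linearly with job wall time, which ignores any fixed per-job overhead.

```python
# Figures quoted in the text above.
total_cost_usd = 2.60      # GPU + CPU + memory across all development runs
runs = 30
job_seconds = 5 * 60       # approximate wall time of one successful job
compute_seconds = 80       # SAM 3D inference + fitting only
cached_job_seconds = 120   # the "under 2 minutes" target with caching

cost_per_run = total_cost_usd / runs

# Assumption: cost scales linearly with job wall time.
cost_floor = cost_per_run * compute_seconds / job_seconds
cost_cached = cost_per_run * cached_job_seconds / job_seconds

print(f"current: ${cost_per_run:.3f} per run")
print(f"cached:  ${cost_floor:.3f} to ${cost_cached:.3f} per run")
```

The lower bound corresponds to paying for compute only, the upper bound to a full 2-minute job.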

And keep in mind — body reconstruction is a one-time thing per user. You build their body model once, then every try-on reuses it.

The questionnaire path is <1s, no GPU at all. Physics draping takes about 1 minute on an L4 — so another ~$0.01 per try-on. But unlike body reconstruction, draping runs on every garment the user tries.
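Because reconstruction is paid once and draping is paid per garment, the per-try-on cost falls as a user tries more items. A small sketch using the figures above; `user_cost` is an illustrative helper, and the draping cost counts only the GPU share at the quoted L4 rate.

```python
L4_USD_PER_HOUR = 0.67   # GPU rate quoted above
DRAPE_SECONDS = 60       # "about 1 minute" per garment
RECON_USD = 0.09         # one-time photo reconstruction, unoptimized

drape_usd = L4_USD_PER_HOUR * DRAPE_SECONDS / 3600   # GPU share only

def user_cost(garments_tried, recon_usd=RECON_USD):
    """One-time reconstruction plus one drape per garment tried."""
    return recon_usd + garments_tried * drape_usd

for n in (1, 5, 20):
    total = user_cost(n)
    print(f"{n:>2} garments: ${total:.2f} total, ${total / n:.3f} per try-on")
```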

Important caveat on the tables below: our numbers are raw infrastructure cost — no margin, no API layer, no support. The third-party prices are commercial APIs that include all of that. Still, the difference is large enough to matter.

Body scan and measurement APIs:

| Provider | Per-scan cost | What you get |
|---|---|---|
| 3DLOOK | $2-5 | Measurements from 2 photos |
| Meshcapade (SMPL commercial) | ~€1-5 | Body model (credit-based: 100-500 credits per avatar, €5/500 credits) |
| Bold Metrics | Contact sales | AI body data from questionnaire |
| Our pipeline, photo path (current, unoptimized) | ~$0.09 | 3D body + measurements |
| Our pipeline, photo path (optimized) | ~$0.03-0.04 | Same |
| Our pipeline, questionnaire path (no GPU) | <$0.01 | Same |

Virtual try-on (diffusion, 2D only — no measurements, no fit info):

| Provider | Per-image cost |
|---|---|
| FASHN | $0.049-0.075 |
| IDM-VTON on Replicate | ~$0.025 |
| Google Vertex AI VTO (Imagen) | $0.06 |
| Our pipeline (body recon + physics draping) | ~$0.10-0.15 |

Honest results

The standard way to evaluate HMR models is MPJPE (mean per-joint position error) and PVE (per-vertex error) — both in millimeters. SAM 3D Body scores well on these: ~55mm MPJPE on 3DPW, ~61mm PVE on RICH. But for size-aware VTO none of that matters. What matters is circumference MAE in centimeters — waist, bust, hips. That’s what tells you if a garment will fit.
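To make the difference between the two kinds of metric concrete, here is a minimal sketch of both. The function names are illustrative and every number in the example is made up; none of it is a result from our pipeline.

```python
import numpy as np

def mpjpe_mm(pred_joints, gt_joints):
    """Mean per-joint position error: average Euclidean distance per joint."""
    diff = np.asarray(pred_joints, dtype=float) - np.asarray(gt_joints, dtype=float)
    return float(np.linalg.norm(diff, axis=1).mean())

def circumference_mae_cm(predicted, measured):
    """Mean absolute error over the circumferences present in `measured`."""
    return sum(abs(predicted[k] - measured[k]) for k in measured) / len(measured)

# Made-up numbers for illustration only, not results from the pipeline.
gt_joints = np.zeros((4, 3))
pred_joints = gt_joints + np.array([30.0, 40.0, 0.0])   # every joint off by 50 mm
predicted = {"bust": 96.0, "waist": 74.5, "hips": 101.0}
tape = {"bust": 90.0, "waist": 74.0, "hips": 98.0}

print("MPJPE (mm):", mpjpe_mm(pred_joints, gt_joints))
print("BWH MAE (cm):", circumference_mae_cm(predicted, tape))
```

MPJPE depends only on where the skeleton is, so a model can score well on it while still getting the soft-tissue shape, and therefore the circumferences, wrong.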

The MHR→Anny conversion itself works well: ~10mm mean nearest-neighbor surface error across the body. The accuracy gap comes more from the upstream photo reconstruction than from the conversion step.
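One simple way to compute a nearest-neighbor error of this kind is sketched below, assuming both meshes are already aligned in the same coordinate frame and units. It is a vertex-to-vertex, one-directional approximation of surface distance, written in plain NumPy for clarity; the exact definition behind the number above may differ, and a KD-tree would be faster on full-size meshes.

```python
import numpy as np

def mean_nn_distance(source, target, chunk=256):
    """Mean distance from each source vertex to its nearest target vertex."""
    source = np.asarray(source, dtype=float)
    target = np.asarray(target, dtype=float)
    total = 0.0
    # Process the source in chunks so the pairwise matrix stays small.
    for start in range(0, len(source), chunk):
        block = source[start:start + chunk]
        # Pairwise distances for this block: shape (len(block), len(target)).
        dists = np.linalg.norm(block[:, None, :] - target[None, :, :], axis=2)
        total += dists.min(axis=1).sum()
    return total / len(source)

# Tiny example: the same three points, shifted by 10 mm along z.
target_pts = np.array([[0.0, 0.0, 0.0], [1000.0, 0.0, 0.0], [0.0, 1000.0, 0.0]])
source_pts = target_pts + np.array([0.0, 0.0, 10.0])
print(mean_nn_distance(source_pts, target_pts))
```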

We’re still early on evaluation. We’ve tested the full photo pipeline on a couple of real people measured by hand with tape — not a large dataset, not final numbers. From these first results the BWH (bust-waist-hips) MAE is roughly 5-8 cm. Some measurements are much better than others — waist tends to be very close, bust is the weakest point.

For context: single-image body reconstruction from in-the-wild photos typically achieves 3-12 cm MAE on circumferences (survey). Controlled environments with synthetic data get 1.6-3 cm. Professional 3D body scanners get ±0.5-1.6 cm. We’re somewhere in the middle — and still evaluating.

The key insight: no single method nails all measurements on the first attempt. The goal isn’t 1 cm MAE from reconstruction alone. It’s to get a close enough body that measurement tuning can close the gap — and that’s what we do.

What we’d do differently

If starting over, we’d invest in reliable body measurement infrastructure from day one. Sounds obvious, but neither MHR nor Anny ships with a measurement library. You get a mesh with 14-18K vertices and no standard way to extract waist circumference from it. We had to build our own: ISO 8559-1 plane-sweep circumferences, landmark detection, contour separation. That measurement layer ended up being foundational for everything downstream: accuracy evaluation, measurement tuning, fit guidelines. Without it you’re guessing. We’re planning to open-source this as clad-body, because anyone working with MHR or Anny will hit the same wall.
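The core of a plane-based circumference can be sketched in a few lines: intersect every triangle with a horizontal plane and add up the resulting segments. This is a simplified illustration, not the clad-body implementation and not a full ISO 8559-1 procedure. `slice_perimeter` and the test cylinder are hypothetical, and on a real body the slice at chest height also cuts through the arms, which is why contour separation is needed on top of this.

```python
import numpy as np

def slice_perimeter(vertices, faces, height, axis=2):
    """Total length of the mesh cross-section at `height` along `axis`."""
    vertices = np.asarray(vertices, dtype=float)
    total = 0.0
    for tri in np.asarray(faces):
        pts = vertices[tri]
        d = pts[:, axis] - height            # signed offset of each corner
        crossing = []
        for i in range(3):
            j = (i + 1) % 3
            if (d[i] < 0) != (d[j] < 0):     # edge straddles the plane
                t = d[i] / (d[i] - d[j])
                crossing.append(pts[i] + t * (pts[j] - pts[i]))
        if len(crossing) == 2:               # a cut triangle yields one segment
            total += np.linalg.norm(crossing[0] - crossing[1])
    return total

def make_cylinder(radius, height, sides):
    """Open cylinder side wall as a triangle mesh (test fixture only)."""
    angles = np.linspace(0.0, 2.0 * np.pi, sides, endpoint=False)
    ring = np.stack([radius * np.cos(angles), radius * np.sin(angles)], axis=1)
    bottom = np.column_stack([ring, np.zeros(sides)])
    top = np.column_stack([ring, np.full(sides, height)])
    faces = []
    for i in range(sides):
        k = (i + 1) % sides
        faces.append((i, k, k + sides))
        faces.append((i, k + sides, i + sides))
    return np.vstack([bottom, top]), np.array(faces)

verts, tris = make_cylinder(radius=0.15, height=1.0, sides=64)
perimeter = slice_perimeter(verts, tris, height=0.5)
print(perimeter, "vs ideal circle", 2 * np.pi * 0.15)
```

Sweeping `height` over a range and picking the minimum or maximum perimeter is one way to locate a landmark such as the waist, though a robust version needs the landmark detection mentioned above.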

The other surprise was UX. When you tell someone to upload a photo for body reconstruction (tight clothes, one person, good lighting), scrolling through their gallery to find a suitable picture rarely takes less than 3 minutes — and by then they’ve lost focus. The questionnaire has its own problem: people don’t always know their body shape or belly type. Both paths have friction we didn’t expect. We’re testing both on real people to see which one actually works for consumers.

Validation is harder than we thought. There aren’t good datasets for this use case. Model agency measurements can’t be fully trusted. The only reliable method is what we’re doing now: measuring real people by hand and comparing.

Conclusions

A commercial 3D body pipeline without SMPL is possible today — for nine cents per body. The first results show that cm-perfect measurements are within reach once you combine reconstruction with tuning. We’re actively evaluating and developing this — the pipeline will likely look quite different soon as we improve accuracy and speed. You can try it yourself. More on that in the next posts.

This is the first post in our body reconstruction series. Next up: predicting body shape from a questionnaire — no photo required.